9/16/2020

Got two stages up and running yesterday on nDisplay. One of them, Reddot, is where we shot our first tests. This is exciting news, and great for the development side of things. Now that we have helped launch two of these stages, we can be confident that on the day we will be able to get what we need.

In terms of The Blight, this means that Evan and Justin will be able to start talking through the practical elements of the design, and how best to use staging to sell our backdrops. We are on track for the early December shoot!

9/3/2020

This week has been a development leap for the team. We were fortunate enough to get time on an 8m x 2.5m LED wall with a 2.5mm pixel pitch. We are currently slotted for tests on a projector setup on a white cyclorama next week, to compare the pros and cons of each approach.
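
For a rough sense of what that wall gives us in pixels, here is a quick back-of-the-envelope sketch. It assumes the wall is a uniform pixel grid at exactly the quoted pitch, and the function name is just something made up for illustration, not part of any tool we are using.

```python
def wall_resolution(width_m, height_m, pitch_mm):
    """Approximate pixel dimensions of an LED wall.

    Assumes a uniform grid with one pixel every `pitch_mm` millimetres;
    real panels may differ slightly at tile seams.
    """
    px_wide = round(width_m * 1000 / pitch_mm)
    px_high = round(height_m * 1000 / pitch_mm)
    return px_wide, px_high


# The wall from this week's test: 8m x 2.5m at 2.5mm pitch.
print(wall_resolution(8.0, 2.5, 2.5))  # -> (3200, 1000)
```

So the scene only needs to fill roughly 3200 x 1000 pixels on this wall, which is useful to keep in mind when judging how much texture detail actually survives to the screen.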

It is looking more likely that for the actual shoot we will not end up with a full LED 'cave', which would mean more traditional lighting methods for the foreground subjects and practical elements. With that in mind, and having seen the potential moiré issues with a wider pixel pitch, we may end up on a projector setup regardless of the availability of an LED screen. Granted, I could be eating my words come December, but that is what testing is for!

Currently walking a test scene, running on my RTX 2070 with Quixel 8K textures, back down to a more usable frame rate. Technically it is running at 18-19 fps, which is close to, but still short of, our camera's frame rate of 24 fps. I would prefer to have a healthy margin above 24 fps to allow for any sporadic LOD hits.
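
To put numbers on that gap, it helps to think in milliseconds per frame rather than fps. The sketch below is only illustrative arithmetic: the 25% headroom figure is an assumption picked for the example, not a number we have settled on, and the helper names are made up.

```python
CAMERA_FPS = 24.0


def frame_time_ms(fps):
    """Time available (or taken) per frame, in milliseconds."""
    return 1000.0 / fps


def render_fps_target(camera_fps, headroom):
    """Render rate needed to keep `headroom` (a fraction) above the camera rate.

    The headroom value is an assumed safety margin for LOD hitches.
    """
    return camera_fps * (1.0 + headroom)


budget_ms = frame_time_ms(CAMERA_FPS)  # about 41.7 ms per frame at 24 fps

# Compare the measured 18-19 fps against the 24 fps budget.
for measured_fps in (18.0, 19.0):
    taken_ms = frame_time_ms(measured_fps)
    print(f"{measured_fps:.0f} fps: {taken_ms:.1f} ms/frame, "
          f"{taken_ms - budget_ms:.1f} ms over budget")

# With an assumed 25% margin, the scene should hold about 30 fps.
print(render_fps_target(CAMERA_FPS, 0.25))
```

In other words, the scene currently needs to shed somewhere around 11 to 14 ms per frame just to hit 24 fps, before any margin is added on top.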

Also noticing limitations with a 90 degree wall setup. Not to look a gift horse in the mouth with regard to the screen we currently have access to, but we knew about the potential issues with this harsh a change in geometry. If we were not using camera re-projection and had a static real-time scene, it would be one thing, but not knowing what could land on the seam makes it antithetical to our filming approach.

8/23/2020

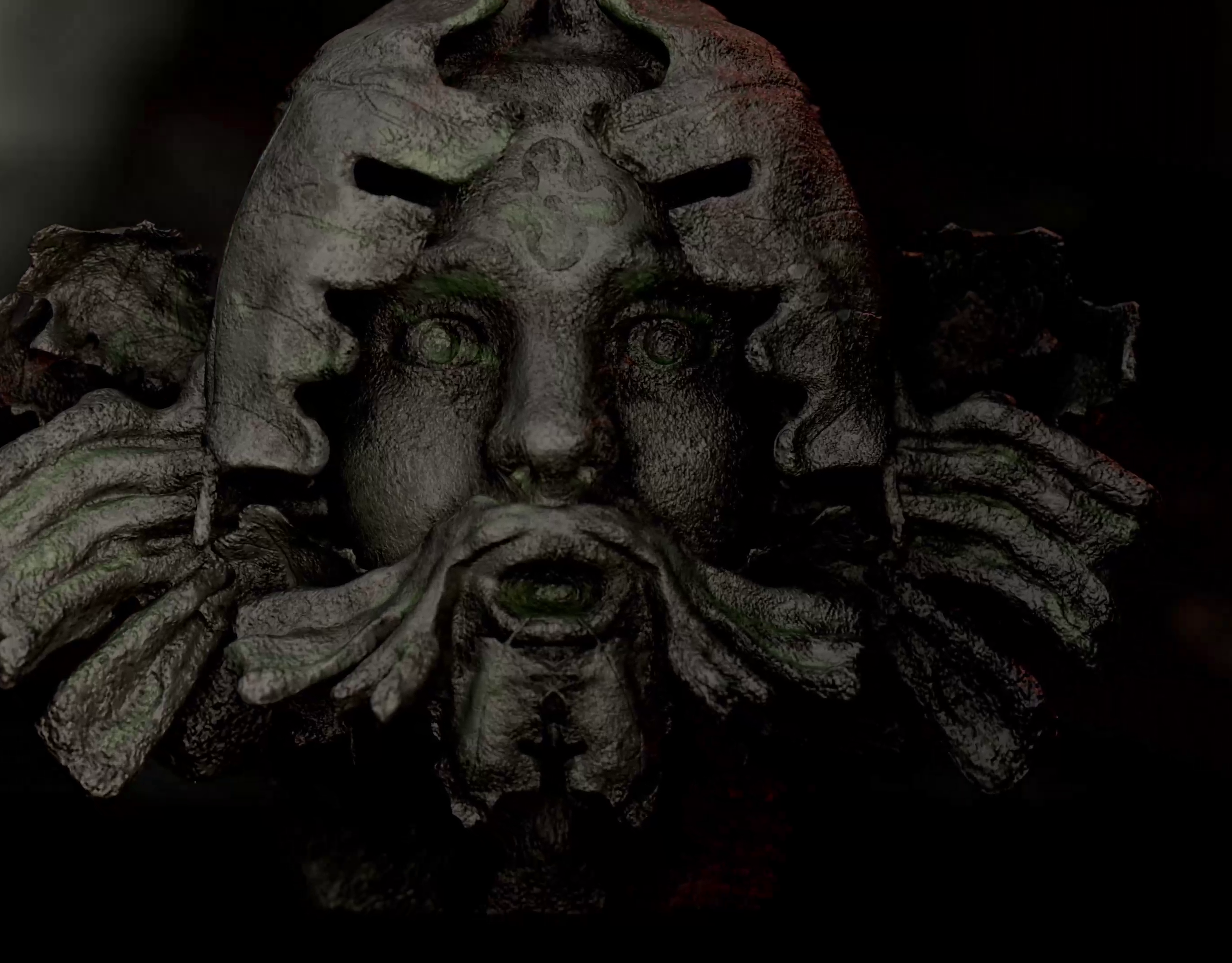

Taking this week to brush up on more organic modelling skills, ZBrush in particular. Usually I stay in Rhino, because of the accuracy of NURBS-based models for construction. Knowing now that the end product will never exist as a physical object gives a large degree of freedom in describing the object to a series of artists.

Even a sculptor working in a sea of high-density foam won't be able to achieve the accuracy that a digital artist can crank out in a few hours.

8/17/2020

Starting off this production blog mostly to keep track of personal development. At the moment the blog is being helmed by the Production Designer, Evan Welch.

My main goal in pursuing virtual production for this project is to carry the digital visions that, at this level, we are usually only able to achieve in concept art all the way through to the final film. So often the constraints of time, money, or any of the myriad other things that limit a production stop a smaller crew from achieving something that is within their grasp. Making the best of an industry standstill has allowed us to reach for the stars on this project, especially with production still months away in December. We can put Justin, our director, into VR to get a feel for the space long before a construction team would ever be able to place one screw.
